Mathematical and Computational Sciences Division

Summary of Activities for Fiscal Year 1999

Information Technology Laboratory

National Institute of Standards and Technology

Technology Administration

U.S. Department of Commerce

January 2000

Abstract

This document summarizes activities of the ITL Mathematical and Computational Sciences Division for FY 1999, including technical highlights, project descriptions, lists of publications, and examples of industrial interactions.

Questions regarding this document should be directed to Ronald F. Boisvert, Mail Stop 8910, NIST, 100 Bureau Drive, Gaithersburg, MD 20899-8910 ([email protected]).


Part I: Overview


Micrograph of a thermal barrier coating (top left) and its realization in OOF.

Background

The mission of the Mathematical and Computational Sciences Division (MCSD) is as follows.

Provide technical leadership within NIST in modern analytical and computational methods for solving scientific problems of interest to U.S. industry. The division focuses on the development and analysis of theoretical descriptions of phenomena (mathematical modeling), the design of requisite computational methods and experiments, the transformation of methods into efficient numerical algorithms for high-performance computers, the implementation of these methods in high-quality mathematical software, and the distribution of software to NIST and industry partners.

Within the scope of our charter, we have set the following general goals.

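With these goals in mind, we have developed a technical program in three general areas.

1. Mathematical modeling in the physical sciences, engineering, and information technology.
2. Development of algorithms and tools for high-performance mathematical computation.
3. Development and dissemination of mathematical reference information.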

Work in the first area is done primarily via collaborations with other technical units of NIST. Projects in the second area are typically motivated by internal NIST needs, but have products, such as software, that are widely distributed. The third area reflects work done primarily for the computational science community at large, although NIST staff benefit as well.

We have developed a variety of strategies to increase our effectiveness in dealing with such a wide customer base. We take advantage of leverage provided via close collaborations with other NIST units, other government agencies, and industrial organizations. We develop tools with the highest potential impact, and make online resources easily available. We provide educational and training opportunities for NIST staff. Finally, we select areas for direct external participation that are fundamental and broadly based, especially those where measurement and standards can play an essential role in the development of new products.

Division staff maintain expertise in a wide variety of mathematical domains, including linear algebra, special functions, partial differential equations, computational geometry, Monte Carlo methods, optimization, inverse problems, and nonlinear dynamics. Application areas in which we have been actively involved this year include materials science, fluid mechanics, electromagnetics, manufacturing engineering, construction engineering, wireless communications, optics, bioinformatics, image analysis, and computer graphics.

Output of Division work includes publications in refereed journals and conference proceedings, technical reports, lectures, short courses, software packages, and Web services. In addition, MCSD staff members participate in a variety of professional activities, such as refereeing manuscripts and proposals, service on editorial boards, conference committees, and offices in professional societies. Staff members are also active in educational and outreach programs for mathematics students at all levels.

Overview of Technical Program

A list of recent activities in each of the division focus areas follows. Note that individual projects may have complementary activities in each of these areas. For example, the micromagnetic modeling work has led to the OOMMF software package, as well as to a collection of standard problems used as benchmarks by the micromagnetics community. Further details on many of these efforts can be found in Part II of this report.

Mathematical modeling in the physical sciences, engineering, and information technology.

Development of algorithms and tools for high-performance mathematical computation.

Development and dissemination of mathematical reference information.

To complement these activities, we engage in short-term consulting with NIST scientists and engineers, conduct a lecture series, and sponsor short courses and workshops in relevant areas. Information on the latter can be found in Part III of this report.

Technical Highlights

In this section, we highlight a few of the significant accomplishments of MCSD this past year. Further details on the technical accomplishments of the division can be found in Parts II and III.

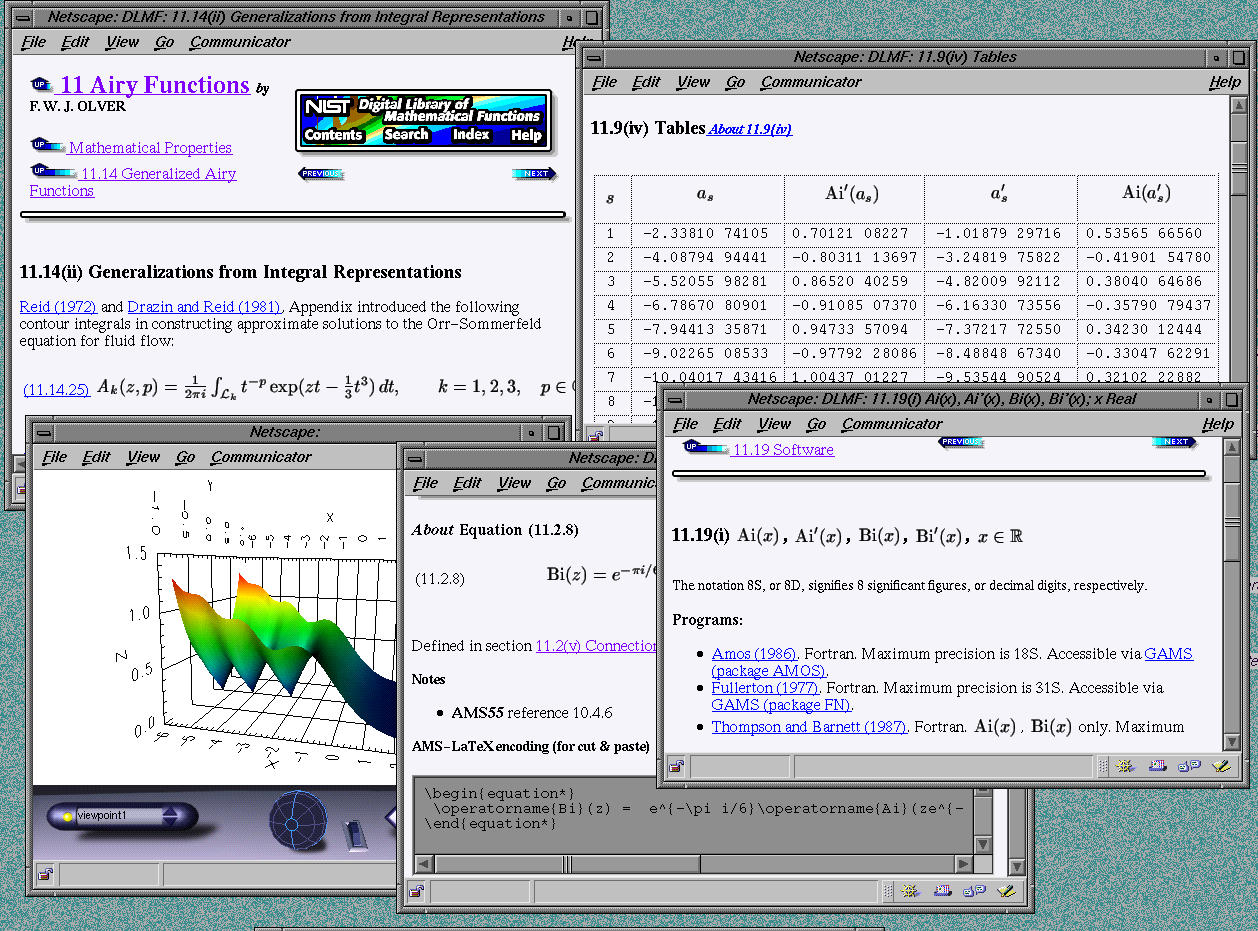

Digital Library of Mathematical Functions Receives NSF Support. In September 1999, MCSD received substantial funding from the National Science Foundation to support the development of the NIST Digital Library of Mathematical Functions (DLMF). The NIST proposal entitled Mathematical Foundations for a Networked Scientific Knowledge Base was awarded $1.3M over three years by the NSF Knowledge and Distributed Intelligence (KDI) program with support from the Division of Mathematical Sciences and the Division of Information and Intelligent Systems. The NIST award was one of 31 given by the KDI program in FY 1999, and the only one awarded to a non-academic institution.

The DLMF will provide NIST-certified reference data and associated information for the higher functions of applied mathematics. Such functions possess a wealth of highly technical and critically important properties that are used by engineers, scientists, statisticians, and others to aid in the construction and analysis of computational models in a variety of applications. The DLMF will deliver this data over the World Wide Web within a rich structure of semantic-based representation, metadata, interactive features, and internal/external links. It will support diverse user requirements such as simple lookup, complex search and retrieval, formula validation and discovery, automatic rule generation, interactive visualization, custom data on demand, and pointers to software and evaluated numerical methodology.

The DLMF was conceived as the successor to the NBS Handbook of Mathematical Functions (AMS 55), edited by M. Abramowitz and I. Stegun and published by NBS in 1964. AMS 55 is possibly the most widely distributed and cited NBS/NIST technical publication of all time. (The U.S. Government Printing Office has sold over 150,000 copies, and commercial publishers are estimated to have sold several times that number.) The DLMF is expected to contain more than twice as much technical information as AMS 55, reflecting the continuing advances of the intervening 40 years.

The DLMF is being developed by a team of researchers in ITL and PL led by Daniel Lozier, Frank Olver, Charles Clark and Ron Boisvert. Additional support is being provided by MEL's Systems Integration for Manufacturing Applications (SIMA) program, TS's Standard Reference Data Program, and the ATP Adaptive Learning Systems program. NSF funding will be used to contract for the services of experts on mathematical functions to develop and validate the technical material that will make up the DLMF. The project is a substantial undertaking, with some 60 participants expected from outside NIST.

OOF Named Technology of the Year. In December 1999, Industry Week magazine named NIST as one of its 1999 Technology of the Year award winners for the development of OOF. OOF is an object-oriented finite-element system for the modeling of real material microstructures. It was created by Steve Langer of MCSD in collaboration with Andy Roosen and Ed Fuller of the NIST Materials Science and Engineering Laboratory (MSEL), and Craig Carter of MIT (formerly of MSEL). OOF shared the spotlight with technologies such as the Roentgen high-resolution flat-panel display from IBM and Toyota's Prius hybrid gas/electric vehicle.

The behavior of a material on the macroscopic scale depends to a large extent on its microstructure: the complex ensemble of polycrystalline grains, second phases, cracks, pores, and other features existing on length scales large compared to atomic sizes. OOF allows materials scientists to determine the influence of microstructure on a material's macroscopic properties through an easy-to-use graphical interface. The OOF user begins with a realistic microstructural geometry by loading a two-dimensional image of a real or simulated material into the program. Features in the image (e.g., grains, pores, and grain boundaries) are identified and assigned local material properties (e.g., crystalline symmetry and orientation, elastic constants, or thermal expansion coefficients). By applying stresses, strains, or temperature changes, the user can measure the effective macroscopic material behavior, or can examine internal stress, strain, and energy density distributions. OOF currently handles only thermoelasticity, but extensions to other material properties are planned.
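The image-to-properties workflow can be illustrated with a much cruder calculation than the finite-element analysis OOF performs. The sketch below is not OOF and does not use its interface. It segments a small synthetic grayscale image into two phases by thresholding, assigns each phase a bulk modulus, and computes the classical Voigt (arithmetic) and Reuss (harmonic) averages, which bracket the effective bulk modulus of the mixture. The threshold, the moduli, the image, and all function names are assumptions made for this example.

```python
import numpy as np

def segment_phases(image, threshold=0.5):
    """Return a boolean mask: True for phase A (bright), False for phase B (dark)."""
    return np.asarray(image, dtype=float) >= threshold

def voigt_reuss_bounds(phase_mask, k_a, k_b):
    """Return (reuss, voigt) averages of the bulk modulus for a two-phase image."""
    f_a = float(np.mean(phase_mask))       # area fraction of phase A
    f_b = 1.0 - f_a                        # area fraction of phase B
    voigt = f_a * k_a + f_b * k_b          # uniform-strain (upper) average
    reuss = 1.0 / (f_a / k_a + f_b / k_b)  # uniform-stress (lower) average
    return reuss, voigt

# Hypothetical 4x4 "micrograph": 12 bright pixels (phase A), 4 dark (phase B).
image = np.array([[0.9, 0.8, 0.9, 0.1],
                  [0.7, 0.9, 0.2, 0.8],
                  [0.9, 0.1, 0.9, 0.9],
                  [0.3, 0.8, 0.7, 0.9]])

mask = segment_phases(image)
# Assumed bulk moduli in GPa for the two phases.
reuss, voigt = voigt_reuss_bounds(mask, k_a=200.0, k_b=50.0)
print(f"Reuss = {reuss:.2f} GPa, Voigt = {voigt:.2f} GPa")
```

These averages depend only on the phase fractions. A finite-element calculation on the actual image geometry, as in OOF, is what distinguishes one microstructure from another at the same phase fractions.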

Beginning in FY2000 the OOF team will be cooperating with scientists at General Electric in a DOE-sponsored project to investigate the use of OOF as a quality control tool on the shop floor. It will be extended to simulate the performance of thermal barrier coatings for turbine blades.

Java Numerics Project Leads to Changes in Java. Java, a network-aware programming language and environment developed by Sun Microsystems, has already made a huge impact on the computing industry. Recently there has been increased interest in the application of Java to high-performance scientific computing. Recommendations of a technical working group chaired by MCSD were instrumental this year in the adoption of a fundamental change in the way floating-point numbers are processed in Java. This change will lead to significant speedups for Java code running on Intel microprocessors like the Pentium. The recommendations were made by the Numerics Working Group of the Java Grande Forum (JGF), a consortium of industry, government, and academic participants interested in the use of Java for high-performance computing applications. Ron Boisvert and Roldan Pozo co-chair the working group. Participants include IBM, Least Squares Software, Numerical Algorithms Group, Visual Numerics, The MathWorks, Syracuse University, the University of Tennessee at Knoxville, and the University of California at Berkeley.

All Java programs run in a portable environment called the Java Virtual Machine (JVM). The behavior of the JVM is carefully specified to ensure that Java codes produce the same results on all computing platforms. Unfortunately, emulating JVM floating-point operations on the Pentium leads to a four- to ten-fold performance penalty. The Working Group studied an earlier Sun proposal and produced a counter-proposal that was much simpler, more predictable, and would eliminate the performance penalty on the Pentium. Sun decided to implement the key provision of the Numerics Working Group proposal in Java 1.2, which was released in the spring of 1999.

Tim Lindholm, Distinguished Engineer at Sun, one of the members of the original Java project, the author of The Java Virtual Machine Specification, and currently one of the architects of the Java 2 platform, said:

Our best attempts led to a public proposal that we considered a bad compromise and were not happy with, but were resigned to. ... The counterproposal was both very sensitive to the spirit of Java and satisfactory as a solution for the performance problem. ... We are sure that we ended up with a better answer for [Java] 1.2, and arrived at it through more complete consideration of real issues, because of the efforts of the Numerics Working Group.

The working group also advised Sun on the specification of elementary functions in Java, which led to improvements in Java 1.3, released in the fall of 1999.

Advances in Blind Deconvolution. Alfred Carasso has made some remarkable early advances this year in so-called blind deconvolution. In this problem, one seeks to deblur an image without knowing the cause of the blur. This is a very difficult mathematical problem. Existing approaches have been iterative in nature, but are both computationally intensive and unreliable. Rather than attempting to solve the problem in its full generality, Carasso identifies a wide class of important blurs that includes and generalizes Gaussian and Lorentzian distributions (class G). Likewise, a large class of sharp images is characterized in terms of its behavior in the Fourier domain (class W). Based on empirical observations which have not previously been exploited, Carasso has shown how 1-D Fourier analysis of blurred image data can be used to detect class G point spread functions acting on class W images. A separate image deblurring technique uses this detected point spread function to deblur the image. Each of these two steps uses direct non-iterative methods, and requires interactive adjustment of parameters. With this approach, blind deblurring of 512x 512 images can be accomplished in minutes on current desktop workstations. The technique has been successfully applied to images from the NIST Physics Lab, as well as to a wide variety of test images. This work has just been written up, but it is already attracting wide interest.

Awards. Several staff members received significant awards this year in addition to the Industry Week Technology of the Year Award received by Stephen Langer and OOF.

Anthony Kearsley was one of two NIST staff members selected to receive the Presidential Early Career Award for Scientists and Engineers from the National Science and Technology Council. The award is given to those who, while early in their careers, show exceptional potential for leadership at the frontiers of scientific knowledge during the twenty-first century. He was cited for his work in the application of optimization methods in a wide variety of areas. (Roldan Pozo of MCSD won this award in 1996.) Later in the year, Kearsley received a joint invitation from the U.S. National Academy of Sciences and the Chinese Academy of Sciences to participate in the second annual Frontiers in Science Symposium, which was held in Beijing on August 20-22. This invitational symposium brought together outstanding American and Chinese scientists from many disciplines to discuss important technical challenges facing their fields.

In January 2000, the Association for Computing Machinery (ACM) named Ronald Boisvert as the winner of the 1999 Outstanding Contribution to ACM Award. Boisvert was cited for his "leadership and innovation as Editor-in-Chief of the ACM Transactions on Mathematical Software and his exceptional contributions to the ACM Digital Library project". ACM has honored 29 people for service to the society since the award's inception in 1976. Boisvert will receive the award at ACM's annual awards ceremony to be held in San Francisco in May 2000.

Technology Transfer. MCSD staff members continue to be active in publishing the results of their research. This year 32 publications appeared which were authored by Division staff, 21 of which appeared in refereed journals. Fourteen additional papers have been accepted for publication in refereed journals. Another 16 manuscripts have been submitted for publication and 27 are being developed.

MCSD staff members were also invited to give 40 lectures in a variety of venues and contributed another 19 talks at conferences and workshops. Two short courses were provided by MCSD for NIST staff this year. Isabel Beichl presented Non-numerical Methods for Scientific Computing and William Mitchell presented Fortran 90 for Scientists and Engineers. Both were very well attended. The Division lecture series remained active, with 26 talks presented (nine by MCSD staff members); all were open to NIST staff. In addition, MCSD staff members were involved in the organization of nine external conferences, workshops, and minisymposia.

Software continues to be a by-product of Division work, and the reuse of such software within NIST and externally provides a means to make staff expertise widely available. This year saw the initial release of the SciMark benchmark, a collection of Java applets and related software for measuring the performance of Java Virtual Machines for numeric-intensive computation. Faculty appointee G. W. Stewart issued the first release of Jampack, a Java package for numerical linear algebra. In contrast to the user-level Jama package released by the MathWorks and MCSD last year, Jampack is based upon a design that provides extensive features to linear algebra experts; it also supports complex matrices. Several existing MCSD software packages saw new releases this year, including OOMMF (micromagnetic modeling), f90gl (OpenGL graphics interface for Fortran 90), and TNT (Template Numerical Toolkit for numerical linear algebra in C++).

Web resources developed by MCSD continue to be among the most popular at NIST. The MCSD Web server at math.nist.gov has serviced more than 20 million Web hits since its inception in 1994 (7 million of which occurred in the past year). AltaVista has identified more than 5,000 external links to the Division server. The NIST Guide to Available Mathematical Software (GAMS), a cross-index and virtual repository of mathematical software, is used more than 10,000 times each month. During a recent 36-month period, 34 prominent research-oriented companies in the .com domain registered more than 100 visits apiece to GAMS. The Matrix Market, a visual repository of matrix data used in the comparative study of algorithms and software for numerical linear algebra, sees more than 100 users each day. It has distributed more than 16 Gbytes of matrix data, including more than 64,000 matrices, since its inception in 1996.

Professional Activities. Division staff members continue to make significant contributions to their disciplines through a variety of professional activities. This year Daniel Lozier was elected chair of the SIAM Special Interest Group on Orthogonal Polynomials and Special Functions. Ronald Boisvert continues as a member of the ACM Publications Board.

Division staff members continue to serve on journal editorial boards. Ronald Boisvert serves as Editor-in-Chief of the ACM Transactions on Mathematical Software. Daniel Lozier is an Associate Editor of Mathematics of Computation and the NIST Journal of Research. Geoffrey McFadden is an Associate Editor of the Journal of Computational Physics and the SIAM Journal on Applied Mathematics, as well as the new journal Interfaces and Free Boundaries.

Division staff members work with a variety of external working groups. Ronald Boisvert and Roldan Pozo chair the Numerics Working Group of the Java Grande Forum. Roldan Pozo chairs the Sparse Subcommittee of the BLAS Technical Forum. Michael Donahue and Donald Porter are members of the Steering Committee of muMag, the Micromagnetic Modeling Activity Group. Ronald Boisvert is a member of the International Federation for Information Processing (IFIP) Working Group 2.5 (Numerical Software) of Technical Committee 2 (Programming Languages).

Administrative Highlights

Four staff members and one postdoc left MCSD's ranks during this year. James Blue, leader of the Mathematical Modeling Group, retired in September 1999 after 20 years of service to NBS/NIST. John Gary, an MCSD staff member in Boulder, retired in December 1998 after more than 15 years of service to NBS/NIST. Both Blue and Gary have retained affiliation with MCSD as guest researchers. Janet Rogers, a 30-year NBS/NIST veteran in Boulder, retired in June 1999. She is pursuing a new career in economic modeling for the governor of Colorado. Karin Remington left in January 1999 to pursue a career in bioinformatics at Celera Genomics in Rockville, MD. Finally, Bruce Fabijonas completed his two-year tenure as an NRC postdoctoral appointee, taking a tenure-track position in the Mathematics Department at Southern Methodist University.

David Sterling, who recently received a Ph.D. in mathematics from the University of Colorado, joined the NIST staff in Boulder in the fall of 1998 as an NRC postdoctoral appointee. In the fall we also made an offer to a recent Ph.D. to join the MCSD staff in Boulder. We expect this person to come on board in March 2000.

Finally, in late 1999 Joyce Conlon was transferred to MCSD from the ITL High Performance Systems and Services Division. She will be providing systems and programming support to a number of division projects.

Two MCSD staff members are currently on developmental assignments. Anthony Kearsley is spending half time at Carnegie Mellon University during FY1999 and FY2000. He is working with researchers in the Computer Science Department there on applications of optimization methods to problems in networking and computer security. Bradley Alpert began a year-long visit at the Courant Institute of NYU, where he is working with Leslie Greengard and colleagues on fast semi-analytic methods with applications in electromagnetic modeling.

Student Employment Program. MCSD provided support for seven student staff members on summer appointments during FY 1999. Such appointments provide valuable experiences for students interested in careers in mathematics and the sciences. In the process, the students can make very valuable contributions to MCSD programs. This year's students were as follows.

| Student | Supervisor | Project |
|---|---|---|
| Elaine Kim, Montgomery Blair High School, Stanford University | G. McFadden | Effects of surface anisotropy on surface diffusion in materials science. A paper for submission to a technical journal is in process. |
| Scott Safranek, Montgomery Blair High School (Scott was a finalist in the 1999 Intel Talent Search) | A. Kearsley | Computational complexity and applications to gene structures. |
| Phillip Yam, Montgomery Blair High School | I. Beichl | Monte Carlo matrix multiplication with application to information retrieval. |
| Brianna Blaser, Carnegie Mellon University | R. Boisvert | Evaluation of the speed and accuracy of proposed Java elementary function libraries and development of benchmark codes for SciMark. |
| Eden Crane, Montgomery College | F. Hunt | Fractal dimension calculation of planar representations of DNA sequences, and Web presentation of computer graphic images from the reflectance competency. |
| Melinda Sandler, Carnegie Mellon University | A. Kearsley | Numerical algorithms for enhanced protein sequence identification. |
| Galen Wilkerson, University of Maryland | R. Pozo | Reference implementation of the sparse BLAS. |

Employee Survey. In the spring of 1999, NIST contracted with an independent firm to survey all employees, asking detailed questions regarding the NIST work environment. The results were aggregated at the NIST, Laboratory, and Division levels and presented to all NIST staff. For MCSD, the results showed general satisfaction with the Division-level work environment. Staff members feel that their colleagues are skilled, cooperative, and motivated. First-line managers are supportive and responsive. Staff members understand what is expected of them, and have the tools and authority needed to carry out their jobs. The survey also uncovered some serious concerns with the research environment within the NIST Labs in general and ITL in particular. Several division meetings were held to discuss the concerns of staff. These culminated in the development of a Division action plan outlining steps that should be taken at the Division, Lab, and NIST levels to address staff concerns. Several of these steps have begun to be implemented at the Division level. Division recommendations have been folded into a Laboratory-level action plan.

Strategic Planning. This year MCSD initiated a formal strategic planning process. All staff members participated in several meetings in which several views of future technical issues affecting Division work were proposed and discussed. A consensus was obtained on major areas in which MCSD should play a role, and specific recommendations for future actions were laid out. The major trends identified as part of the strategic vision of the division are summarized below.

The ordinary industrial user of complex modeling packages has few tools available to assess the robustness, reliability, and accuracy of models and simulations. Without these tools and methods to instill confidence in computer-generated predictions, the use of advanced computing and information technology by industry will lag behind technology development. NIST, as the nation's metrology lab, is increasingly being asked to focus on this problem.

Research studies undertaken by laboratories like NIST are often outside the domain of commercial modeling and simulation systems. Consequently, there is a great need for the rapid development of flexible and capable research-grade modeling and simulation systems. Components of such systems include high-level problem specification, graphical user interfaces, real-time monitoring and control of the solution process, visualization, and data management. Such needs are common to many application domains, and re-invention of solutions to these problems is quite wasteful.

The availability of low-cost networked workstations will promote growth in distributed, coarse-grain computation. Such an environment is necessarily heterogeneous, exposing the need for virtual machines with portable object codes. Core mathematical software libraries must adapt to this new environment.

All resources in future computing environments will be distributed by nature. Components of applications will be accessed dynamically over the network on demand. There will be increasing need for online access to reference material describing mathematical definitions, properties, approximations, and algorithms. Semantically rich exchange formats for mathematical data must be developed and standardized. Trusted institutions, like NIST, must begin to populate the net with such dynamic resources, both to demonstrate feasibility and to generate demand, which can ultimately be satisfied in the marketplace.

The NIST Laboratories will remain a rich source of challenging mathematical problems. MCSD must continually retool itself to be able to address needs in new application areas and to provide leadership in state-of-the-art analysis and solution techniques in more traditional areas. Many emerging needs are related to applications of information technology. Examples include VLSI design, security modeling, analysis of real-time network protocols, image recognition, object recognition in three dimensions, bioinformatics, and geometric data processing. Applications throughout NIST will require increased expertise in discrete mathematics, combinatorial methods, data mining, large-scale and non-standard optimization, stochastic methods, fast semi-analytical methods, and multiple length-scale analysis.

These issues, as well as proposed MCSD responses, are detailed in the MCSD Strategic Plan, available upon request. The MCSD plan provided input to the ITL Strategic Plan, which has just been finalized. MCSD's plans are consistent with those of the Laboratory and of NIST as a whole.

Olga Taussky Todd Conference. Several MCSD staff members participated in a symposium honoring Olga Taussky Todd, a mathematical pioneer and a former staff member of NBS, held July 16-18, 1999 at the Mathematical Sciences Research Institute in Berkeley, California. The symposium, entitled "The Olga Taussky Todd Celebration of Careers in Mathematics for Women," was organized and sponsored by the Association for Women in Mathematics (AWM). Besides honoring Taussky Todd (1906-1995), one of the century's most important American mathematicians, the symposium showcased contemporary women mathematicians whose careers mirror aspects of Taussky Todd's work. A third objective was to foster the careers of upcoming female mathematicians. During the 1950s, Taussky Todd and her husband John Todd worked for the Institute for Numerical Analysis (INA), part of the Applied Mathematics Laboratory of the National Bureau of Standards housed on the campus of the University of California at Los Angeles (UCLA). Isabel Beichl, an MCSD mathematician, and Dianne O'Leary, Professor of Computer Science at the University of Maryland and MCSD faculty appointee, were members of the organizing committee. Beichl also gave a presentation about Taussky Todd's years at NBS. MCSD mathematician Fern Hunt was a plenary speaker. In a talk entitled "A Mathematician at NIST Today," she spoke about current interdisciplinary research on the light scattering properties of coated surfaces. All three staff members participated in the mentoring activities that were a uniquely engaging and lively part of the conference.

Part II: Project Descriptions

Optical measurements of an automobile painted with gray metallic paint made at NIST are the basis for this graphical image rendered by Gregory Ward Larson.

Mathematical Modeling Group

Computer Graphic Rendering of Material Surfaces

Fern Y. Hunt

Gary Meyer (University of Oregon)

Gregory Ward Larson (Silicon Graphics Inc.)

http://math.nist.gov/~FHunt/webpar4.html

For some years computer programs have produced images of scenes based on a simulation of scattering and reflection of light off one or more surfaces in the scene. In response to increasing demand for the use of rendering in design and manufacturing, the models used in these programs have undergone intense development. In particular, more physically realistic models are sought (i.e., models that more accurately depict the physics of light scattering). However, there has been a lack of relevant measurements needed to complement the modeling. As part of a NIST project entitled "Measurement Science for Optical Reflectance and Scattering" (http://ciks.cbt.nist.gov/appearance/), Fern Hunt is coordinating the development of a computer rendering system that utilizes high-quality optical and surface topographical measurements performed here at NIST. The system will be used to render physically realistic and potentially photorealistic images. Success in this and similar efforts can pave the way to computer-based prediction and standards for appearance that can assure the quality and accuracy of products as they are designed, manufactured, and displayed for electronic marketing.

Dr. Hunt is collaborating with Gary Meyer of the University of Oregon and Gregory Ward Larson of Silicon Graphics Inc. This work is part of a competence project on the appearance of painted and coated surfaces involving four laboratories: ITL, BFRL, MEL, and PL. In addition to the rendering work, Dr. Hunt has also been modeling the microstructure of metallic coatings using point processes.

Highlights for FY 1999 included the following.

Direct Blind Deconvolution

Alfred S. Carasso

Blind deconvolution seeks to deblur an image without knowing the cause of the blur. This is a difficult mathematical question that is not fully understood. For example, the 1D problem turns out to be harder than the 2D problem! So far, most approaches to blind deconvolution have been iterative in nature, but the iterative approach is generally ill-behaved, often developing stagnation points or diverging altogether. Even when the iterative process is stable, a large number of iterations may be necessary to resolve fine detail.

The present approach does not attempt to solve the blind deconvolution in full generality. Rather, attention is focused on a wide class of blurs that includes and generalizes Gaussian and Lorentzian distributions, and has significant applications. This is the class G. Likewise, a large class of sharp images is exhibited and characterized in terms of its behavior in the Fourier domain. This is the class W. It is shown how 1-D Fourier analysis of blurred image data can be used to detect class G point spread functions acting on class W images. This approach is based on empirical observations about the class W that have not previously been exploited in the literature. A separate image deblurring technique uses this detected point spread function to deblur the image. Each of these two steps uses direct non-iterative methods, and requires interactive adjustment of parameters. As a result, blind deblurring of 512 x 512 images can be accomplished in minutes of CPU time on current desktop workstations. This is considerably faster than what is currently feasible by iterative methods.
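To make the second, non-blind step concrete, the sketch below blurs a synthetic image with a blur whose Fourier transform has the form exp(-alpha (xi^2 + eta^2)^beta) and then deblurs it in one pass using the known parameters. This parametrization is an assumption chosen because beta = 1 gives a Gaussian and beta = 1/2 a Lorentzian; the report does not state the formula for class G. The deblurring step is ordinary Tikhonov-regularized Fourier division, substituted here for illustration. It is not the deblurring technique used in this project, and the detection of the blur parameters from the blurred image is not reproduced. All function names and parameter values are hypothetical.

```python
import numpy as np

def class_g_otf(shape, alpha, beta):
    """Assumed transfer function exp(-alpha * (xi^2 + eta^2)**beta) on the FFT grid."""
    fy = np.fft.fftfreq(shape[0])          # vertical frequencies, cycles per sample
    fx = np.fft.fftfreq(shape[1])          # horizontal frequencies, cycles per sample
    xi, eta = np.meshgrid(fx, fy)          # both arrays have shape (rows, cols)
    return np.exp(-alpha * (xi**2 + eta**2) ** beta)

def apply_otf(image, otf):
    """Blur an image by multiplying its spectrum by the transfer function."""
    return np.real(np.fft.ifft2(np.fft.fft2(image) * otf))

def tikhonov_deblur(blurred, otf, reg=1e-3):
    """Direct (non-iterative) deblurring: regularized division in the Fourier domain."""
    spectrum = np.fft.fft2(blurred)
    restored = np.conj(otf) * spectrum / (np.abs(otf) ** 2 + reg)
    return np.real(np.fft.ifft2(restored))

# Synthetic sharp image: a bright square on a dark background.
n = 64
sharp = np.zeros((n, n))
sharp[22:42, 22:42] = 1.0

otf = class_g_otf(sharp.shape, alpha=40.0, beta=0.5)   # Lorentzian-type blur
blurred = apply_otf(sharp, otf)
restored = tikhonov_deblur(blurred, otf, reg=1e-3)

err_blurred = np.linalg.norm(blurred - sharp)
err_restored = np.linalg.norm(restored - sharp)
print(f"error before: {err_blurred:.3f}, after: {err_restored:.3f}")
```

The regularization parameter `reg` plays the role of an interactively adjusted parameter: smaller values recover more detail but amplify any noise in the data.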

There is a great deal of interest in blind deconvolution at several U.S. Government Laboratories, including the Air Force Phillips Laboratory where tracking and monitoring of orbiting space objects is a major activity. Blind deconvolution is also an active field of research at several universities, including Minnesota, Stanford, and UCLA. At NIST, this approach has been successfully applied to improve images obtained from several types of electronic microprobes in use in the NIST Surface and Microanalysis Sciences Division.

Analysis of the blind problem within the class G has produced a number of interesting and unexpected results. These results can stimulate further research by other investigators. This work is totally original and has opened new avenues in this difficult subject. Current research aims at developing direct methods that can be applied to important types of blurs not included in G.

Machining Process Metrology, Modeling and Simulation

Timothy Burns

Matt Davies (NIST MEL)

Chris Evans (NIST MEL)

Jon Pratt (NIST MEL)

Tony Schmitz (NIST MEL)

This is a continuing collaboration on projects in high-speed milling and hard turning (turning hardened steels on a lathe) with researchers in MEL's Automated Production Technology Division (APTD). The mission of APTD is to fulfill the measurements and standards needs of U.S. discrete-parts manufacturers in mechanical metrology and advanced manufacturing technology.

The high-speed milling work involves the development and application of new models of the dynamic interactions among tool, workpiece, and machine, in order to take full advantage of the material removal capabilities offered by state-of-the-art machines. Such processes are limited more by machine tool chatter than by catastrophic thermal tool failure. In particular, low-immersion cutting strategies that could minimize tool wear as well as provide surface finishes that do not require subsequent costly hand polishing are under investigation.

Traditional machine-tool chatter stability theory involves the study of functional differential equations. A typical practical problem involves the use of Laplace transform methods to map the boundaries in at least a two-dimensional parameter space (e.g., width of cut vs. cutting speed) between stable and unstable response regions for a given system. For very low-immersion cutting, this type of analysis breaks down because the tool is not in contact with the workpiece much of the time. This year, a simple model for low-immersion milling was developed, based on an impact-oscillator model. This has led to a much simpler stability analysis that has been experimentally verified for a specific system.

In a number of industries, including large manufacturers such as Boeing and Caterpillar, work is underway to evaluate the effectiveness of the use of large-scale finite-element software to assist in the prototyping of high-speed machining processes such as hard turning. APTD and MCSD are actively involved in this effort. While considerable progress has been made in the development of predictive models for low-strain-rate manufacturing processes, there is currently a need for improved predictive capabilities for high-rate processes. The accuracy of any model of a large-scale manufacturing operation is limited by how well it can predict the most basic components of the operation. Of these components, chip morphology is certainly the most fundamental, because it provides a direct record of the complex nonlinear plastic material flow process that determines the cutting forces and the temperature along the tool-material interface. These in turn have a dominant effect on tool wear as well as surface finish and subsurface damage of the workpiece.

Material strain hardening was added to the one-dimensional orthogonal cutting model of Davies, Burns, and Evans. It was shown that this could explain the chaotic transition from continuous to shear-localized chip formation that has been observed to take place with increasing cutting speed in a number of materials.

Collaboration with Carol Johnson and Howard Yoon of the Optical Technology Division in the NIST Physics Lab has been re-funded by ATP for FY99-00. This work has involved the development of a device to measure the temperature gradient across the thin region called the primary shear zone where metal cutting takes place in, for example, a hard-turning operation. The work has also involved the development of an experimental setup in MEL for the cutting experiments, and the acquisition and application of a commercial finite-element code by ITL. The software has been helpful in designing the experiments. Ultimately, if the experiments are successful, they will be useful for the validation of any finite-element simulations of high-speed metal-cutting operations.

Mathematical Modeling of Solidification

Geoffrey B. McFadden

Mathematical modeling is frequently used to understand and predict the properties of processed materials as a function of the processing conditions by which the materials are formed. During crystal growth of alloys by solidification from the melt, the homogeneity of the solid phase is strongly affected by the prevailing conditions at the solid-liquid interface, both in terms of the geometry of the interface and the arrangements of the local temperature and solute fields near the interface. Instabilities that occur during crystal growth can cause spatial inhomogeneities in the sample that significantly degrade the mechanical and electrical properties of the crystal. Considerable attention has been devoted to understanding and controlling these instabilities, which generally include interfacial and convective modes that are challenging to model by analytical or computational means.

A long-standing collaborative effort between the Mathematical and Computational Sciences Division and the Metallurgy Division has included support from the NASA Microgravity Research Division as well as extensive interactions with university and industrial researchers. In the past year a number of projects have been undertaken that address outstanding issues in materials processing through mathematical modeling. In collaboration with Sam Coriell (855), William Boettinger (855), and Robert Sekerka (Carnegie Mellon University), the dynamics of interfaces in layered geometries for multicomponent materials were studied. These studies are motivated by diffusion problems in thin films and junctions, where the nucleation and growth of additional intermetallic phases can occur during processing. The studies are based on finding similarity solutions that describe the dynamics and geometry of the growing phases, which result in nonlinear equations that describe the interface motion. An interesting outcome of the research is that multiple solutions can be obtained that share the same initial conditions, suggesting that the specific evolution of interfaces that is observed in such systems may be highly sensitive to the details of the system preparation prior to processing.

NIST scientists have been in the forefront of the development of solidification models that feature interfaces that are not sharp (having zero width) but instead are diffuse, with a finite width over which the phase transition occurs. John Cahn (850) pioneered models for the spinodal decomposition of alloys (the Cahn-Hilliard equation) and for the motion of phase boundaries in ordered materials (the Allen-Cahn equation) that are based on such diffuse interface descriptions. Models of this type are of computational as well as theoretical interest, since they can form the basis for numerical schemes that can treat complicated free boundary problems while avoiding the need to track the motion of the interface explicitly. In collaboration with William Boettinger (855), Dan Anderson (George Mason University) and Adam Wheeler (University of Southampton), we have extended such models to include the effects of convection in the melt. These models are being applied to simulate the effects of convection during dendritic growth, where the models' capability to describe both convection and changes in interface topology may allow the understanding of grain formation due to the transport of dendrite fragments within the crystal.

Other projects that have been undertaken this year include work with Sam Coriell (855) and Robert Sekerka (CMU) on models of dendritic growth, work with John Cahn (850), Adam Wheeler (University of Southampton), and Richard Braun (University of Delaware) on diffuse interface models of order-disorder transitions, work with Elaine Kim (Stanford University undergraduate) on the effects of surface tension anisotropy on the pinch-off of axisymmetric rods, work with Sam Coriell (855) and Bernard Billia (University of Marseille) on models of Peltier Interface Demarcation, and work with Sam Coriell (855), Bruce Murray (University of Binghamton), and Alex Chernov (University Space Research Association) on models of step formation during faceted crystal growth.
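The diffuse-interface idea can be seen in one dimension with the Allen-Cahn equation written in a common nondimensional form, u_t = eps^2 u_xx + u - u^3. This scaling, the explicit time stepping, and all parameter values are assumptions made for illustration; they are not the models used in the work above. The sketch starts from a broad profile and lets it relax. No interface is tracked explicitly, yet the solution settles onto a layer of width about eps whose steady shape is tanh(x / (sqrt(2) eps)).

```python
import numpy as np

def allen_cahn_1d(n=201, eps=0.05, t_end=5.0):
    """Relax u_t = eps^2 u_xx + u - u^3 on [-1, 1] with zero-flux ends."""
    x = np.linspace(-1.0, 1.0, n)
    dx = x[1] - x[0]
    dt = 0.2 * dx**2 / eps**2          # well inside the explicit stability limit
    u = np.tanh(5.0 * x)               # broad initial transition layer
    for _ in range(int(t_end / dt)):
        lap = np.empty_like(u)
        lap[1:-1] = (u[2:] - 2.0 * u[1:-1] + u[:-2]) / dx**2
        lap[0] = 2.0 * (u[1] - u[0]) / dx**2      # mirrored ghost point
        lap[-1] = 2.0 * (u[-2] - u[-1]) / dx**2   # mirrored ghost point
        u = u + dt * (eps**2 * lap + u - u**3)
    return x, u

if __name__ == "__main__":
    x, u = allen_cahn_1d()
    exact = np.tanh(x / (np.sqrt(2.0) * 0.05))
    print("max deviation from tanh profile:", np.max(np.abs(u - exact)))
```

The phase variable u takes values near -1 and +1 in the two bulk phases, and the interface is simply the region where u passes through zero, which is what lets such schemes handle topology changes without special treatment.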

Daniel Anderson

Richard Braun

Bruce Murray

John Cahn (NIST MSEL)

Sam Coriell (NIST MSEL)

Bernard Billia (University of Marseille)

Alex Chernov (University Space Research Association)

Robert Sekerka (Carnegie-Mellon University)

Adam Wheeler (University of Southampton)

Micromagnetic Modeling

Michael Donahue

Donald Porter

James Blue

Robert McMichael (NIST MSEL)

http://math.nist.gov/oommf/

http://www.ctcms.nist.gov/~rdm/mumag.html

The engineering of such IT storage technology as magnetic recording media, GMR sensors for read heads, and magnetic RAM (MRAM) elements requires an understanding of magnetization patterns in magnetic materials at a submicron scale. Mathematical models are required to interpret measurements at this scale. The Micromagnetic Modeling Activity Group (muMAG) was formed to address fundamental issues in micromagnetic modeling through two activities: the definition and dissemination of standard problems for testing modeling software, and the development of public domain reference software. MCSD staff is engaged in both of these activities. Their Object-Oriented MicroMagnetic Framework (OOMMF) software package is a reference implementation of micromagnetic modeling software. Their achievements in this area since October 1998 include:

Software releases

Standard problems

Supporting the micromagnetics community

Scientific contributions

Ongoing development

OOF: Finite Element Analysis of Material Microstructures

Stephen Langer

Edwin Fuller (NIST MSEL)

Andrew Roosen (NIST MSEL)

Craig Carter (MIT)

http://www.ctcms.nist.gov/oof/

The OOF Project, a collaboration among MCSD, MSEL's Ceramics Division, and MIT, is developing software tools for analyzing real material microstructure. The microstructure of a material is the (usually) complex ensemble of polycrystalline grains, second phases, cracks, pores, and other features occurring on length scales large compared to atomic sizes. The goal of OOF is to use data from a micrograph of a real material to compute the macroscopic behavior of the material via finite element analysis. It was originally designed as a tool for industrial and academic researchers in materials science, but a group at General Electric has proposed using it to monitor the manufactured quality of the ceramic thermal barrier coatings on turbine blades. GE and NIST received a grant from DOE to develop OOF for this application. A number of universities have inquired about its possible use as a teaching tool.

In December 1999, OOF was named one of the 25 Technologies of the Year by Industry Week magazine. Other notable events of the last year include:

Optimization in Practice

Anthony Kearsley

Many important problems arising in science and engineering are often most conveniently handled by casting them as optimizations, to be solved with numerical algorithms implemented as computer programs. These practical problems frequently require optimization under complex constraints, involve large unmanageable matrices, and/or call for ad hoc procedures. Such pathologies have not yet been systematically analyzed and classified.

We are currently concerned with the development and analysis of algorithms for the solution of such problems as they are encountered at NIST. These specialized and uniquely structured problems of estimation, simulation and control of complex systems have surfaced in a variety of areas such as the modeling of

Of particular interest are nonlinear optimization problems that involve computationally intensive function evaluations. The problems are ubiquitous; they arise in simulations with finite elements, in making statistical estimates, or in simply dealing with functions difficult to handle. In many such examples, we have been successful by comparing choices in the implementation of optimization algorithms. In asking, "What makes one formulation for the solution of a problem more desirable than another?" we study the delicate balance among the choices of mathematical approximation, computer architecture, data structures and other factors. For the solution of many applications-driven problems, the proper balance of these factors is crucial.

Time-Domain Modeling Algorithms for the Design of Electromagnetic Devices

Bradley Alpert

Leslie Greengard (New York University)

Thomas Hagstrom (University of New Mexico)

Radiation and scattering of acoustic and electromagnetic waves are increasingly modeled using time-domain computational methods, which incrementally advance a solution in time according to the governing differential equations. This general approach is receiving increasing attention due to its promise for modeling wide-band signals, material inhomogeneities, and device nonlinearities. The applications requiring these capabilities include the design of antennas, low-visibility aircraft, microcircuits, and transducers, nondestructive testing of mechanical devices, and medical and geophysical imaging, among others.

For many of these applications, the accuracy of the computed models is of central importance. Nevertheless, existing methods typically allow for only limited control over accuracy and cannot achieve high accuracy for reasonable computational cost. The goal of this project is the development of algorithms and codes for high-accuracy time-domain computations, addressing the key weaknesses of existing methods. These include accurate nonreflecting boundary conditions (that reduce an infinite physical domain to a finite computational domain), suitable geometric representations of scattering objects, and rapidly convergent, stable spatial and temporal discretizations for realistic scatterer geometries.

This project in prior years has already achieved a major advance in applying the exact nonreflecting boundary conditions (which are global in space and time). An integral equation formulation of the boundary conditions has led to a scheme whose computational cost is O(N log N) in two dimensions and O(N^2 log N) in three dimensions, for a domain N wavelengths across, as compared with O(N^2) and O(N^3), respectively, for the interior grid work.

This year another significant advance was achieved, which is expected to eliminate the "small cell" problem for explicit, high-order marching. When a nontrivial scatterer meets a Cartesian discretization, small cells are inevitably created and generally lead to instabilities in explicit time evolution schemes. Historically, this fact has forced a choice between the undesirable options of implicit time marching and elaborate mesh generation, and sometimes both of these options have been employed to get stability with high-order convergence. A new integral evolution formula for the wave equation was discovered this year that, quite simply, leads to explicit, high-order discretizations that appear immune to the small cell problem. An implementation in one spatial dimension has been reported; implementations in two and three dimensions are under development.
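The small cell problem can be made concrete with a little arithmetic. For conventional explicit schemes, the stable time step is bounded by a CFL-type condition proportional to the smallest cell width divided by the wave speed, so a single sliver cell dictates the step for the whole grid. The Python sketch below only evaluates that bound; it does not implement the new integral evolution formula, and the function name, grid, and CFL constant of 1 are assumptions chosen for illustration.

```python
def max_stable_dt(cell_widths, wave_speed=1.0, cfl=1.0):
    """CFL-type bound: the time step is limited by the smallest cell."""
    return cfl * min(cell_widths) / wave_speed

# A uniform 1D grid of 100 cells on the unit interval.
uniform = [0.01] * 100

# The same grid after a scatterer boundary cuts one cell into a sliver
# (5% of a cell) and a remainder (95% of a cell).
cut = uniform[:50] + [0.0005, 0.0095] + uniform[51:]

if __name__ == "__main__":
    print("uniform grid dt:", max_stable_dt(uniform))
    print("cut-cell grid dt:", max_stable_dt(cut))
```

In this hypothetical case one sliver cell reduces the allowable time step by a factor of about 20, even though the remaining 100 cells are essentially unchanged.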

Tools for Genome Sequence Analysis

Fern Hunt

Antti Pesonen

As part of an exploratory project in bioinformatics funded by the NIST Information Technology Laboratory, Fern Hunt and guest researcher Antti Pesonen have developed a software tool that can be used to investigate statistical and complexity patterns of DNA sequences. The selection of features was motivated firstly by their potential usefulness in answering questions that are frequently addressed in the bioinformatics literature, and secondly by their amenability to graphical display and visualization. Production-level sequencing of entire genomes (e.g., bacterial genomes, all 16 chromosomes of yeast) has become routine for simpler organisms, and very rapid progress is being made in the sequencing of the genomes of higher organisms including human beings. These efforts have led to DNA sequences ranging in length from several hundred thousand bases for a virus genome to dozens of millions for a single human chromosome. Consequently, our goal has been to integrate quantitative and visual methods very closely.

As part of an effort to develop tests and to characterize probability models of DNA sequences, the program GenPatterns displays subsequence frequencies and their patterns of recurrence from a sliding window applied to sequence data. Bacterial genomes and chromosome data can be downloaded from GENBANK. A color-coded matrix represents the histogram of subsequence frequencies. The display is based on a tensor product of a two by two matrix based on the four DNA bases A, C, T, G. There are zoom and navigation features that allow one to move through a large data set and remain oriented so that one can investigate the prevalence of specific longer sequences e.g. oligomers (length eight), that are of biological significance.
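The tensor-product layout can be sketched as follows. Each base is assigned a cell of a 2 x 2 matrix, and a subsequence of length k is placed in a 2^k x 2^k matrix by concatenating the row bits and the column bits of its bases. This is a minimal Python illustration, not GenPatterns code; in particular, the assignment of A, C, T, G to the four cells and the function names are assumptions.

```python
from collections import Counter

# Assumed 2 x 2 layout of the bases: (row bit, column bit).
BASE_POS = {"A": (0, 0), "C": (0, 1), "T": (1, 0), "G": (1, 1)}

def kmer_counts(seq, k):
    """Count every length-k subsequence seen by a sliding window of width k."""
    seq = seq.upper()
    windows = (seq[i:i + k] for i in range(len(seq) - k + 1))
    return Counter(w for w in windows if all(b in BASE_POS for b in w))

def kmer_matrix(seq, k):
    """Arrange the counts in a 2^k x 2^k matrix (k-fold tensor-product layout)."""
    size = 2 ** k
    matrix = [[0] * size for _ in range(size)]
    for kmer, count in kmer_counts(seq, k).items():
        row = col = 0
        for base in kmer:
            r, c = BASE_POS[base]
            row = 2 * row + r   # the first base selects the coarsest block
            col = 2 * col + c
        matrix[row][col] = count
    return matrix

if __name__ == "__main__":
    for line in kmer_matrix("ACGTACGT", 2):
        print(line)
```

With this layout, all subsequences sharing a common prefix fall in the same sub-block of the matrix, which is what makes zooming from short to longer subsequences natural in a color-coded display.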

A great deal of work has been done on modeling DNA sequences as the sample path of a stochastic process. Despite the theoretical difficulty of viewing sequence data as stationary, these models have been fairly successful in gene identification and location of so-called binding sites in mRNA, a sequence quite similar to DNA. GenPatterns has a feature that allows one to compare the model to the data in various ways. A color-coded matrix histogram can be used to visually assess the predicted versus actual subsequence frequencies. There are also provisions for numerical comparison that are under development. The program allows comparison to be made between data and Markov models of arbitrary order. These are expected to be useful in modeling local features of selected parts of the data. There are also features based on the common practice of mapping DNA to paths that are analogous to random walks. This is very useful because, although DNA sequences resemble truly random sequences, they differ in significant respects, and this class of tools provides a convenient visual distinction. Quantitatively speaking, this distinction is the basis of methods based on statistical significance and maximum likelihood commonly used in computational biology.
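A comparison of predicted and actual subsequence frequencies can be sketched for the simplest case, a first-order Markov model whose transition probabilities are estimated from the sequence itself. This is a minimal Python illustration under that assumption; it is not GenPatterns code, and the function names and the toy sequence are hypothetical.

```python
from collections import Counter
from itertools import product

BASES = "ACGT"

def observed_freqs(seq, k):
    """Relative frequency of each length-k subsequence in a sliding window."""
    n = len(seq) - k + 1
    counts = Counter(seq[i:i + k] for i in range(n))
    return {w: c / n for w, c in counts.items()}

def markov1_predicted_freqs(seq, k):
    """Frequencies predicted by a first-order Markov chain fitted to seq."""
    base_freq = observed_freqs(seq, 1)
    pair_counts = Counter(seq[i:i + 2] for i in range(len(seq) - 1))
    from_counts = Counter(seq[:-1])

    def transition(a, b):
        return pair_counts[a + b] / from_counts[a] if from_counts[a] else 0.0

    predicted = {}
    for word in map("".join, product(BASES, repeat=k)):
        p = base_freq.get(word[0], 0.0)
        for a, b in zip(word, word[1:]):
            p *= transition(a, b)
        predicted[word] = p
    return predicted

if __name__ == "__main__":
    seq = "ACGT" * 50
    obs = observed_freqs(seq, 3)
    pred = markov1_predicted_freqs(seq, 3)
    for word in sorted(obs):
        print(word, round(obs[word], 4), round(pred[word], 4))
```

Large gaps between the two columns for particular subsequences are the kind of discrepancy that a color-coded matrix of predicted versus actual frequencies would make visible at a glance.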

Mathematical Software Group

Digital Library of Mathematical Functions

Daniel Lozier

Ronald Boisvert

Marjorie McClain

Bruce Miller

F. W. J. Olver

Bonita Saunders

Bruce Fabijonas

Qiming Wang (NIST ITL)

Charles Clark (NIST PL)

Peter Mohr (NIST PL)

David Penn (NIST PL)

http://math.nist.gov/DigitalMathLib/

NIST/NBS is known for providing standard reference data in physical sciences and engineering. Less well known is the fact that NIST also supplies reference data in mathematics. The prime example is the NBS Handbook of Mathematical Functions, prepared under the editorship of Milton Abramowitz and Irene Stegun and published in 1964 by the U.S. Government Printing Office. The NBS Handbook is a technical best seller, and likely is the most frequently cited of all technical references. Total sales to date of the government edition exceed 150,000; further sales by commercial publishers are several times higher. Its daily sales rank on amazon.com consistently surpasses other well-known reference books in mathematics, such as Gradshteyn and Ryzhik's Table of Integrals. The number of citations reported by Science Citation Index continues to rise each year, not only in absolute terms but also in proportion to the total number of citations. Some of the citations are in pure and applied mathematics but even more are in physics, engineering, chemistry, statistics, and other disciplines. The main users are practitioners, professors, researchers, and graduate students.

Except for correction of typographical and other errors, no changes have ever been made in the Handbook. This leaves much of the content unsuitable for modern usage, particularly the large tables of function values (over 50% of the pages), the low-precision rational approximations, and the numerical examples that were geared for hand computation. Also, numerous advances in the mathematics, computation, and application of special functions have been made or are in progress. We have begun a project to transform this old classic radically. The Digital Library of Mathematical Functions: A New Web-Based Compendium on Special Functions will be published on the Internet and in a book edition with CD-ROM. The Internet edition will be provided free-of-charge from a NIST Standard Reference Data Web Site. The Web Site and CD-ROM will include capabilities for searching, downloading, and visualization.

Funded by the National Science Foundation and NIST, the DLMF Project is contracting with the best available world experts to rewrite all existing chapters, and to provide additional chapters to cover new functions (such as the Painlevé transcendents) and new methodologies (such as computer algebra). The work is being supervised by four NIST editors (Lozier, Olver, Clark, and Boisvert) and an international board of ten associate editors. The associate editors are

Richard Askey (University of Wisconsin),

Michael Berry (University of Bristol),

Walter Gautschi (Purdue University),

Leonard Maximon (George Washington University),

Morris Newman (University of California at Santa Barbara),

Peter Paule (Technical University of Linz),

William Reinhardt (University of Washington),

Ingram Olkin (Stanford),

Nico Temme (CWI Amsterdam), and

Jet Wimp (Drexel University).

Major achievements since the beginning of FY 1999 are as follows:

Fortran 90 Bindings for OpenGL

William Mitchell

The Fortran 90 bindings for the OpenGL graphics library were developed by MCSD, and have been adopted by the OpenGL Architecture Review Board (ARB) as the official OpenGL Fortran 90 interface. OpenGL is a software interface for applications to generate interactive 2D and 3D computer graphics. OpenGL is designed to be independent of operating system, window system, and hardware operations, and is supported by many vendors, with products for computing platforms from PCs to supercomputers. The ARB is the governing board of OpenGL, and consists of members from a variety of companies with OpenGL products including ATI, Compaq, Evans & Sutherland, Hewlett-Packard, IBM, Intel, Intergraph, Microsoft, NVIDIA and Silicon Graphics.

In conjunction with this work, Mitchell has developed f90gl, a public domain reference implementation of the Fortran 90 bindings. Since its initial release three years ago, it has been downloaded approximately 5000 times. Several vendors have begun using f90gl as part of their products or providing access to f90gl from their product web pages, including Absoft, Compaq, Interactive Software Services, Lahey, and N. A. Software.

Highlights for this year include:

Information Services for Computational Science: GAMS, Matrix Market

Ronald Boisvert

Bruce Miller

Marjorie McClain

Roldan Pozo

Karin Remington

http://math.nist.gov/gams/

http://math.nist.gov/MatrixMarket/

MCSD continues to provide Web-based information resources to the computational science research community. The first of these is the Guide to Available Mathematical Software (GAMS). GAMS is a cross-index and virtual repository of some 9,000 mathematical and statistical software components of use in science and engineering research. It catalogs software, both public domain and commercial, that is supported for use on NIST central computers by ITL, as well as software assets distributed by netlib. While the principal purpose of GAMS is to provide NIST scientists with information on software available to them, the information and software it provides are of great interest to the public at large. GAMS users locate software via several search mechanisms. The most popular of these is the use of the GAMS Problem Classification System. This system provides a tree-structured taxonomy of standard mathematical problems that can be solved by extant software. It has also been adopted for use by major math software library vendors.

A second resource provided by MCSD is the Matrix Market, a visual repository of matrix data used in the comparative study of algorithms and software for numerical linear algebra. The Matrix Market database contains more than 400 sparse matrices from a variety of applications, along with software to compute test matrices in various forms. A convenient system for searching for matrices with particular attributes is provided. The web page for each matrix provides background information, visualizations, and statistics on matrix properties.
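For readers unfamiliar with the exchange format used by the repository, the following minimal Python sketch reads a sparse matrix in Matrix Market coordinate form: a banner line, optional comment lines beginning with %, a size line giving rows, columns, and stored entries, and then one 1-based "row column value" triple per line. The reader handles only real or integer matrices with general or symmetric storage, and the function name and sample data are hypothetical.

```python
def read_matrix_market_coordinate(text):
    """Parse a real/integer coordinate-format matrix into a dict of entries."""
    lines = text.splitlines()
    banner = lines[0].split()
    if len(banner) != 5 or banner[0] != "%%MatrixMarket":
        raise ValueError("missing Matrix Market banner")
    obj, fmt, field, symmetry = (token.lower() for token in banner[1:])
    if (obj, fmt) != ("matrix", "coordinate"):
        raise ValueError("only coordinate-format matrices are handled here")
    if field not in ("real", "integer") or symmetry not in ("general", "symmetric"):
        raise ValueError("unsupported field or symmetry in this sketch")

    # Drop comment lines and blank lines after the banner.
    body = [ln for ln in lines[1:] if ln.strip() and not ln.lstrip().startswith("%")]
    rows, cols, nnz = map(int, body[0].split())

    entries = {}
    for line in body[1:1 + nnz]:
        i, j, value = line.split()
        i, j = int(i) - 1, int(j) - 1        # convert to 0-based indices
        entries[(i, j)] = float(value)
        if symmetry == "symmetric" and i != j:
            entries[(j, i)] = float(value)   # only one triangle is stored
    return rows, cols, entries

SAMPLE = """%%MatrixMarket matrix coordinate real general
% a small 3 x 3 example
3 3 4
1 1 2.0
2 2 3.5
3 1 -1.0
3 3 4.0
"""

if __name__ == "__main__":
    print(read_matrix_market_coordinate(SAMPLE))
```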

This year we purchased two new Division server systems, both Sun UltraSPARC 60s. Considerable effort was expended in converting our existing setup from SunOS 4.1 to the new Solaris 2 servers. Our main server, math.nist.gov, was placed in the NIST central computing facility on the new external network (outside the new NIST firewall), while the native GAMS database server was placed on a new internal server, math-i.nist.gov. A mirroring facility was set up to automatically copy all static Division web pages from the internal server to the external server. Finally, a new system for maintaining server logs was developed and implemented.

The Web resources developed by MCSD continue to be among the most popular at NIST. The MCSD Web server at math.nist.gov has serviced more than 20 million Web hits since its inception in 1994 (7 million of which have occurred in the past year). AltaVista has identified more than 5,000 external links to the Division server. GAMS is used more than 10,000 times each month. During a recent 36-month period, 34 prominent research-oriented companies in the .com domain registered more than 100 visits apiece to GAMS. The Matrix Market sees more than 100 users each day. It has distributed more than 16 Gbytes of matrix data, including more than 64,000 matrices, since its inception in 1996.

The utility of these systems is demonstrated by their adoption in other contexts. This year, for example, several new numerical analysis textbooks recommend use of GAMS to their students:

Waterloo Maple Inc. is planning to include support for I/O of Matrix Market format matrices in the next release of Maple.

Java Numerics

Ronald Boisvert

Bruce Miller

Roldan Pozo

Karin Remington

G.W. Stewart

http://math.nist.gov/javanumerics/

http://math.nist.gov/scimark/

Java, a network-aware programming language and environment developed by Sun Microsystems, has already made a huge impact on the computing industry. Recently there has been increased interest in the application of Java to high performance scientific computing. MCSD is participating in the Java Grande Forum (JGF), a consortium of companies, universities, and government labs who are working to assess the capabilities of Java in this domain, and to provide community feedback to Sun on steps that should be taken to make Java more suitable for large-scale computing. The JGF is made up of two working groups: the Numerics Working Group and the Concurrency and Applications Working Group. The former is co-chaired by R. Boisvert and R. Pozo of MCSD. Among the institutions participating in the Numerics Working Group are: IBM, Intel, Least Squares Software, NAG, Sun, Visual Numerics, Syracuse University, the University of Karlsruhe, the University of North Carolina, and the University of Tennessee at Knoxville.

This year, MCSD staff presented the findings of the Working Group in a variety of forums, including

In June 1999, MCSD issued a second report on Working Group recommendations. Comments on these recommendations formed the bulk of a keynote address presented by Bill Joy at the ACM Java Grande Conference in San Francisco later that month. Joy, the developer of Berkeley Unix, co-founder of Sun Microsystems, and co-author of the original Java specification, endorsed the work of the JGF, as well as most of the recommendations of the Numerics Working Group.

Earlier recommendations of the Numerics Working Group were instrumental in the adoption of a fundamental change in the way floating-point numbers are processed in Java. This change will lead to significant speedups to Java code running on Intel microprocessors like the Pentium. All Java programs run in a portable environment called the Java Virtual Machine (JVM). The behavior of the JVM is carefully specified to ensure that Java codes produce the same results on all computing platforms. Unfortunately, emulating JVM floating-point operations on the Pentium leads to a four- to ten-fold performance penalty. The Working Group studied an earlier Sun proposal, producing a counter-proposal which was much simpler, more predictable, and which would eliminate the performance penalty on the Pentium. Sun decided to implement the key provision of the Numerics Working Group proposal in Java 1.2, which was released in the spring of 1999.

Tim Lindholm, Distinguished Engineer at Sun, one of the members of the original Java project, the author of The Java Virtual Machine Specification, and currently one of the architects of the Java 2 platform said:

Our best attempts led to a public proposal that we considered a bad compromise and were not happy with, but were resigned to. ... The counterproposal was both very sensitive to the spirit of Java and satisfactory as a solution for the performance problem. ... We are sure that we ended up with a better answer for [Java] 1.2, and arrived at it through more complete consideration of real issues, because of the efforts of the Numerics Working Group.

The working group also advised Sun on the specification of elementary functions in Java, which led to improvements in Java 1.3, which was released in the fall of 1999. The Numerics Working Group has now begun work on a series of formal Java Specification Requests for language extensions.

Also this year, Roldan Pozo and Bruce Miller developed an interactive numerical benchmark to measure the performance of scientific codes on Java Virtual Machines running on various platforms. The SciMark 2.0 benchmark includes computational kernels for FFTs, SOR, Monte Carlo integration, sparse matrix multiply, and dense LU factorization, comprising a representative set of computational styles commonly found in numeric applications. Several of SciMark's kernels have been adopted by the benchmarking effort of the Java Grande Forum.

SciMark can be run interactively from Web browsers, or can be downloaded and compiled for stand-alone Java platforms. Full source code is provided. The SciMark 2.0 result is recorded as megaflop rates for the numerical kernels, as well as an aggregate score for the complete benchmark. The current database lists results for over 800 computational platforms, from laptops to high-end servers. As of January 2000, the record for SciMark 2.0 is over 76 Mflops. We are in the process of adding full applications to expand the SciMark suite.
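To make the idea of a kernel-level megaflop rate concrete, here is a minimal Python sketch of one of the kernel types listed above, successive over-relaxation (SOR) on a 2D grid, with a hand-counted operation total divided by wall-clock time. This is not SciMark code (SciMark is written in Java); the grid size, relaxation factor, and the count of 6 floating-point operations per interior update are assumptions of this sketch.

```python
import time

def sor_sweeps(grid, omega, sweeps):
    """In-place SOR sweeps for Laplace's equation on a list-of-lists grid."""
    m, n = len(grid), len(grid[0])
    quarter_omega = omega * 0.25
    one_minus_omega = 1.0 - omega
    for _ in range(sweeps):
        for i in range(1, m - 1):
            up, row, down = grid[i - 1], grid[i], grid[i + 1]
            for j in range(1, n - 1):
                row[j] = (quarter_omega * (up[j] + down[j] + row[j - 1] + row[j + 1])
                          + one_minus_omega * row[j])
    return grid

def sor_flops(m, n, sweeps):
    """3 adds + 1 multiply, then 1 multiply + 1 add, per interior point."""
    return 6.0 * (m - 2) * (n - 2) * sweeps

def measure_mflops(m=50, n=50, sweeps=20, omega=1.25):
    """Time the kernel on a grid with boundary value 1 and interior value 0."""
    grid = ([[1.0] * n]
            + [[1.0] + [0.0] * (n - 2) + [1.0] for _ in range(m - 2)]
            + [[1.0] * n])
    start = time.perf_counter()
    sor_sweeps(grid, omega, sweeps)
    elapsed = time.perf_counter() - start
    return sor_flops(m, n, sweeps) / elapsed / 1e6, grid

if __name__ == "__main__":
    rate, _ = measure_mflops()
    print("SOR kernel rate: %.2f Mflops" % rate)
```

An aggregate score in this style would combine the rates of several such kernels, for example by averaging them, so that no single computational pattern dominates the result.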

Finally, G. W. Stewart released the first version of Jampack, a Java package for numerical linear algebra. Jampack provides an alternative design to the JAMA package issued by the MathWorks and NIST last year. While JAMA is designed for the non-expert user, Jampack provides a variety of facilities of interest to experts and others who may want to extend the package. In addition, it supports complex matrices.

JazzNet: Cost-Effective Parallel Computing

Roldan Pozo

William Mitchell

Several years ago, NIST was among the first institutions to investigate low-cost parallel computing using commodity parts and operating systems. We built several PC clusters using the Linux operating system and fast-ethernet and Myrinet networking technologies. We put real applications on these machines and studied performance/cost trade-offs. The goal was to demonstrate to industry that such configurations were practical computing solutions, not just of interest to the computer science research community. Today, Linux clusters are not only commonplace in industry, but have actually become the dominant product being offered by several hardware vendors.

In conjunction with this effort, William Mitchell has been investigating the best software environments to utilize these architectures. To date, he has evaluated nearly every Fortran 90/95 Linux compiler available and has conducted extensive benchmarking, reliability, completeness, and cost analyses.

More information on the JazzNet Linux cluster, applications, and available software is available at the project website.

Parallel Adaptive Refinement and Multigrid Finite Element Methods

William Mitchell

Finite element methods using adaptive refinement and multigrid techniques have been shown to be very efficient for solving partial differential equations on sequential computers. Adaptive refinement reduces the number of grid points by concentrating the grid in the areas where the action is, and multigrid methods solve the resulting linear systems in an optimal number of operations. W. Mitchell has been developing a code, PHAML, to apply these methods on parallel computers. The expertise and software developed in this project are useful for many NIST laboratory programs, including material design, semiconductor device simulation, and the quantum physics of matter.

Most of this year's progress was in the grid-partitioning algorithm. An adaptively refined grid must be partitioned for distribution over the processors in such a way that the processor load is balanced and communication between processors is minimized. Mitchell has developed the K-Way Refinement Tree (RTK) partitioning method to quickly find a high quality partition by using information contained in a tree representation of the refinement process.
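The idea of partitioning from a refinement tree can be sketched as follows. This is a simplified illustration under stated assumptions, not the RTK algorithm itself: the `Node` class, the depth-first ordering of leaves, and the rule of cutting that ordering into k pieces of roughly equal weight are all hypothetical choices made for the example. Leaves stand for grid elements, and elements that share a parent in the tree end up adjacent in the ordering, which tends to keep each piece connected.

```python
class Node:
    """A node of a refinement tree; leaves represent elements of the current grid."""

    def __init__(self, name, children=None, weight=1.0):
        self.name = name
        self.children = children or []
        self.weight = weight  # computational load; only meaningful for leaves


def leaves_in_order(node):
    """Collect the leaves of the tree in depth-first (left to right) order."""
    if not node.children:
        return [node]
    result = []
    for child in node.children:
        result.extend(leaves_in_order(child))
    return result


def partition_leaves(root, k):
    """Cut the depth-first leaf sequence into k contiguous, weight-balanced sets."""
    leaves = leaves_in_order(root)
    total = sum(leaf.weight for leaf in leaves)
    parts = [[] for _ in range(k)]
    running = 0.0
    for leaf in leaves:
        # Assign each leaf according to where its weight midpoint falls.
        midpoint = running + 0.5 * leaf.weight
        index = min(k - 1, int(midpoint * k / total))
        parts[index].append(leaf.name)
        running += leaf.weight
    return parts


def uniform_tree(depth, counter=None):
    """Build a binary refinement tree with 2**depth unit-weight leaves (for the demo)."""
    if counter is None:
        counter = [0]
    if depth == 0:
        name = "e%d" % counter[0]
        counter[0] += 1
        return Node(name)
    return Node("parent", [uniform_tree(depth - 1, counter),
                           uniform_tree(depth - 1, counter)])


if __name__ == "__main__":
    print(partition_leaves(uniform_tree(3), 4))
```

Because the tree already exists as a by-product of adaptive refinement, a partition of this kind costs only a traversal of the tree.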

This work has led to a collaborative effort with Sandia National Laboratories to develop Zoltan, a dynamic load-balancing library. Mitchell has designed and implemented a Fortran interface for the C library, and contributed the RTK method. PHAML has been modified to (optionally) use Zoltan as the grid partitioner, providing an application testbed to compare different load balancing methods. In addition, PHAML has been enhanced to solve a larger class of problems by adding the capabilities of non-constant coefficients, general boundary conditions, time dependence, nonlinearity, and coupled systems. These allow it to be used to solve application problems in collaboration with NIST scientists.

Sparse BLAS Standardization

Roldan Pozo

http://math.nist.gov/spblas/

NIST is playing a leading role in the new standardization effort for the Basic Linear Algebra Subprograms (BLAS) kernels for computational linear algebra. The BLAS Technical Forum (BLAST) is coordinating this work. BLAST is an international consortium of industry, academia, and government institutions, including Intel, IBM, Sun, HP, Compaq/Digital, SGI/Cray, Lucent, Visual Numerics, and NAG.

One of the most anticipated components of the new BLAS standard is support for sparse matrix computations. Roldan Pozo chairs the Sparse BLAS subcommittee. NIST was first to develop and release public domain reference implementations for early versions of the standard, which has helped shape the proposal that was released for public review this year.
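As an illustration of the kind of kernel involved, the sketch below computes a sparse matrix-vector product with the matrix held in compressed sparse row (CSR) form. It is a Python illustration only: the function name `csr_matvec`, its argument names, and the choice of CSR storage are assumptions for this example and do not reproduce the interface defined by the standard or by the NIST reference implementations.

```python
def csr_matvec(values, col_index, row_ptr, x):
    """Return y = A*x for a sparse matrix A stored in compressed sparse row form.

    values    -- nonzero entries of A, listed row by row
    col_index -- column index of each entry in `values`
    row_ptr   -- row_ptr[r] is the position in `values` where row r starts;
                 the final entry equals the number of nonzeros
    """
    y = []
    for row in range(len(row_ptr) - 1):
        total = 0.0
        for k in range(row_ptr[row], row_ptr[row + 1]):
            total += values[k] * x[col_index[k]]
        y.append(total)
    return y


if __name__ == "__main__":
    # A = [[4, 0, 1],
    #      [0, 3, 0],
    #      [2, 0, 5]]
    values = [4.0, 1.0, 3.0, 2.0, 5.0]
    col_index = [0, 2, 1, 0, 2]
    row_ptr = [0, 2, 3, 5]
    print(csr_matvec(values, col_index, row_ptr, [1.0, 2.0, 3.0]))
```

Only the stored nonzeros are touched, so the work is proportional to the number of nonzeros rather than to the full matrix dimension squared.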

Support of the NIST Computing Environment

R. Boisvert

J. Conlon

W. Mitchell

(Division Staff)

Division staff members continue to contribute to the high quality scientific computing environment for NIST scientists and engineers via short-term consulting related to mathematics, algorithms and software, and by the support of software libraries on central systems. Division staff maintains a variety of research-grade public-domain math software libraries on the NIST Central Computers (SGI Origins, IBM SP2), as well as for NFS mounting by individual workstations. Among the libraries supported are the NIST Core Math Library (CMLIB), the SLATEC library, the NIST Standards Time Series and Regression Package (STARPAC), and LAPACK. These libraries, along with a variety of commercial libraries implemented on NIST central systems and many other such resources, are cross-indexed by MCSD in the Guide to Available Mathematical Software for ease of discovery by NIST staff.

This year, we continued our efforts to implement these libraries on the new NIST SGI Origin 2000 systems (in most cases many versions of the libraries are maintained to support a variety of compilation modes). We also migrated our implementations of CMLIB and LAPACK to ITL's centralized software checkout service to ease the process of mounting libraries on workstations distributed throughout the Institute. This work is supported by the ITL High Performance Systems and Services Division.

TNT: Object Oriented Numerical Programming

Roldan Pozo

NIST has a history of developing some of the most visible object-oriented linear algebra libraries, including Lapack++, Iterative Methods Library (IML++), Sparse Matrix Library (SparseLib++), Matrix/Vector Library (MV++), and most recently the Template Numerical Toolkit (TNT).

TNT incorporates many of the ideas we have explored with previous designs, and includes new techniques that were difficult to support before the ANSI C++ standardization. The library includes support for both C and Fortran array layouts, array sections, basic linear algebra algorithms (LU, Cholesky, QR, and eigenvalues) as well as primitive support for sparse matrices.
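The difference between C and Fortran array layouts comes down to how a two-dimensional index is mapped to a position in linear storage. The following Python sketch shows the two mappings with zero-based indices. The function names are hypothetical and the code is not taken from TNT, which is a C++ library.

```python
def c_offset(i, j, rows, cols):
    """Row-major (C) layout: the elements of a row are contiguous."""
    return i * cols + j


def fortran_offset(i, j, rows, cols):
    """Column-major (Fortran) layout: the elements of a column are contiguous."""
    return j * rows + i


def flatten(matrix, offset):
    """Store a list-of-rows matrix in a flat list using the given offset function."""
    rows, cols = len(matrix), len(matrix[0])
    storage = [None] * (rows * cols)
    for i in range(rows):
        for j in range(cols):
            storage[offset(i, j, rows, cols)] = matrix[i][j]
    return storage


if __name__ == "__main__":
    a = [[1, 2, 3],
         [4, 5, 6]]
    print(flatten(a, c_offset))        # row by row
    print(flatten(a, fortran_offset))  # column by column
```

A library that supports both layouts can hide this choice behind a common indexing interface, so that the same algorithm runs on arrays received from either C or Fortran code.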

TNT has enjoyed several thousand downloads and is currently in use in several industrial applications.

Optimization and Computational Geometry Group

Computing the Partition Function

Isabel Beichl

Dianne O'Leary

Francis Sullivan (IDA Center for Computing Sciences)

A central issue in statistical physics is that of evaluating the partition function which describes the probability distribution of states of some system of interest. In a number of important settings, including the Ising model, the q-state Potts model, and the monomer-dimer model, no closed form expressions are known for three-dimensional cases. In addition, obtaining exact solutions of the problems is known to be computationally intractable.

At NIST, related problems occur in the materials science theory group and the combinatorial chemistry working group; in physics, models for the Bose-Einstein condensate can be formulated as a dimer problem.

Many of these computations can be formulated as combinatorial counting problems for which the Markov Chain Monte Carlo (MCMC) method can be used to give approximate solutions. In some cases, the Markov Chain is rapidly mixing so that, in principle at least, a pre-specified arbitrary accuracy can be obtained in only a polynomial number of steps. In practice, however, the wall-clock computing time is prohibitively long and hardware bounds on precision can limit accuracy for native mode arithmetic.

A class of probabilistic importance sampling methods has been developed for these problems that appears to be much more effective than the standard MCMC technique. The key to the approach is the use of non-linear iterative methods, such as Sinkhorn balancing, to construct an extremely accurate importance function. Because importance sampling gives unbiased estimates, the importance function can be adjusted so that the simulation spends more time in the more complex regions of state space.
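Sinkhorn balancing itself is simple to state: alternately rescale the rows and the columns of a nonnegative square matrix until every row and every column sums to one. The Python sketch below shows only this balancing step, assuming a matrix with strictly positive entries so that the iteration converges. The function name and parameters are hypothetical, and how the balanced matrix is turned into an importance function is not described in the text and is not attempted here.

```python
def sinkhorn_balance(matrix, sweeps=200, tol=1.0e-12):
    """Scale a square matrix with positive entries toward doubly stochastic form.

    Each sweep normalizes every row to sum to 1 and then every column to
    sum to 1. The input is not modified; a balanced copy is returned.
    """
    a = [list(row) for row in matrix]
    n = len(a)
    for _ in range(sweeps):
        # Row normalization.
        for i in range(n):
            row_sum = sum(a[i])
            a[i] = [value / row_sum for value in a[i]]
        # Column normalization.
        for j in range(n):
            col_sum = sum(a[i][j] for i in range(n))
            for i in range(n):
                a[i][j] /= col_sum
        # Columns now sum to 1; stop once the rows do too.
        if max(abs(sum(row) - 1.0) for row in a) < tol:
            break
    return a


if __name__ == "__main__":
    balanced = sinkhorn_balance([[1.0, 2.0], [3.0, 4.0]])
    for row in balanced:
        print(row)
```

The entries of the balanced matrix can be read as probabilities, which is what makes the result a natural starting point for guiding a sampling procedure.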

Recent accomplishments include the following.

Terrain Modeling

Christoph Witzgall

Javier Bernal

Marjorie McClain

William Stone (BFRL)

G. Cheok (BFRL)

Robert Lipman (BFRL)

David Gilsinn (MEL)

J. Lavery (ARO)

Since 1986, C. Witzgall, J. Bernal, and M. McClain, with Witzgall as project leader, have investigated, implemented, and disseminated methods for representing terrain by triangulated surfaces or "TINs" (= Triangulated Irregular Networks). The work utilizes Delaunay triangulations and variations thereof. Part of this work has been supported by the Army Corps of Engineers and by DARPA.
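Once a terrain is represented as a TIN, the elevation at any location inside a triangle follows by linear interpolation of the three vertex elevations. The Python sketch below illustrates this with barycentric coordinates. It covers evaluation on a single triangle only, not the construction of a Delaunay triangulation, and the function name `tin_elevation` is hypothetical rather than part of the project's software.

```python
def tin_elevation(triangle, x, y):
    """Interpolate the elevation at (x, y) on one triangle of a TIN.

    `triangle` is a sequence of three (x, y, z) vertices. Returns None when
    (x, y) lies outside the triangle's footprint.
    """
    (x1, y1, z1), (x2, y2, z2), (x3, y3, z3) = triangle
    det = (y2 - y3) * (x1 - x3) + (x3 - x2) * (y1 - y3)
    if det == 0:
        raise ValueError("degenerate triangle: vertices are collinear")
    # Barycentric weights of the query point with respect to the vertices.
    w1 = ((y2 - y3) * (x - x3) + (x3 - x2) * (y - y3)) / det
    w2 = ((y3 - y1) * (x - x3) + (x1 - x3) * (y - y3)) / det
    w3 = 1.0 - w1 - w2
    if min(w1, w2, w3) < 0.0:
        return None
    return w1 * z1 + w2 * z2 + w3 * z3


if __name__ == "__main__":
    tri = [(0.0, 0.0, 10.0), (4.0, 0.0, 20.0), (0.0, 4.0, 30.0)]
    print(tin_elevation(tri, 1.0, 1.0))
    print(tin_elevation(tri, 5.0, 5.0))
```

Evaluating a full TIN would add a search for the triangle containing the query point, which this sketch leaves out.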

Current work for the Topographic Engineering Laboratory (TEC) of the Army Corps of Engineers is to document editing and "thinning" methods for hydrographic data and corresponding surface generation. Emphasis is on defining the boundaries of sets of data points and identifying areas of missing data.

In 1999, collaboration started with NIST BFRL on applying TIN techniques to LIDAR scans of construction/excavation sites. Such scanning technology is being considered as a component of systems for automatically monitoring construction progress. Witzgall and Bernal are co-authors, together with lead author G.S. Cheok, R.R. Lipman, and W.C. Stone, of the forthcoming "NIST Construction Automation Program Report No. 4: Non-Intrusive Scanning Technology for Construction Status Determination". The challenges encountered were noise abatement and the combination of scan data taken from different viewpoints.

Witzgall is experimenting with methods for screening and filtering noisy scan data and subsequent numerical "registration" (coordinate adjustment) for improved coincidence of separate data collections.

It also became apparent that filtering and surface generation should be undertaken in terms of the separate polar coordinate systems centered at the respective scanner locations rather than forming the union of separate data clouds and filtering that combination prior to representing the combined data set by a surface. There is furthermore a need to extend TIN techniques to surfaces which are not "elevated", that is, have a one-to-one projection into a footprint plane, since some surfaces on construction sites can be expected to fall into a more general category. Both of the above tasks require the ability to manipulate and intersect general triangulated surfaces in space. Bernal is developing algorithms for such manipulation based on "tetrahedralization" of data points in space. That technique is also capable of identifying spatial "cut" and "fill" regions and their volumes as defined by the intersection of the prevailing "terrain surface" with a planned "design surface". It is felt that tetrahedralization techniques will attract increasing attention because of their ability to represent proximity relationships in space.

In a separate development, Witzgall and D. Gilsinn (NIST MEL) are collaborating with J. Lavery of the Army Research Office, Durham, on applications to terrain modeling of the nonoscillatory splines pioneered by Lavery.

|

Part III Activity Data |

A collection of windows from the Digital Library of Mathematical Functions.

Publications

Presentations

Conferences, Workshops and Lecture Series

Shortcourses

MCSD Seminar Series

DLMF Seminar Series

Conference/Workshop Organization

Electronic Resources Developed and Maintained

External Contacts

MCSD staff members enjoy many contacts with colleagues in industry, government, and academia. The purpose of such contacts is quite varied. In some cases, we are providing short-term consulting related to the R&D output of the division. In other cases, an external colleague is providing expertise by participating in a NIST project. The following is an (incomplete) list of institutions with which we have interacted recently.

Industrial Labs

|

Advanced Network Consultants, Inc. ARACOR Civilized Software Compaq Conoco General Electric Corporate Research General Pneumatics GTE Speech and Language Research Hewlett-Packard Labs IBM Research Interactive Software Services |

ISCIENCES, Inc. Lahey Least Squares Software LG Electronics Lucent Technology Maxtor Corporation Merck Pharmaceuticals Mobil Research Moldyn, Inc. Molecular Mining Corporation MTS Systems Corp. |

Numerical Algorithms Group SAIC Scriptics Corporation Silicon Graphics, Inc. SONIX, Inc. Sun Microsystems Texas Instruments Tydeman Consulting The Institute for Genomic Research (TIGR) The MathWorks United Technologies Visual Numerics |

Government / Non-profit Organizations

|

American Mathematical Society (AMS) Association for Computing Machinery (ACM) Air Force Office of Scientific Research Air Force Phillips Laboratory Army Corps of Engineers Army Research Office CWI Amsterdam DARPA |

IDA Center for Computing Sciences Lawrence Berkeley Labs Mathematical Association of America (MAA) NASA NASoftware, Ltd. National Radio Astronomy Observatory National Science Foundation NIH |